AI-aided learning was high-stakes for India's 260 million underserved students.

Mainstream EdTech assumed connectivity, literacy, and competitive motivation, conditions absent for children using shared devices in interrupted, low-resource contexts.

Under emotional pressure, performance-driven design (leaderboards, visible scoring, timed assessments) increased anxiety rather than engagement, especially for learners who'd internalized that they were "behind" after years of classroom punishment and comparison.

The pattern was clear: The barrier wasn't content access. It was learner psychology.

I led end-to-end research and design of an emotionally safe learning system, defining an interaction model where AI operates invisibly, adapting pace, difficulty, and support without exposing struggle.

I translated socio-emotional complexity (fear of failure, fragile confidence, shame-based disengagement) into clear, decision-ready interfaces that proved to learners they were capable.

Partnering with 10 NGO educators across Karnataka and Telangana, I delivered a solution that upheld learning rigor without triggering performance anxiety.

Understanding the Invisible Layer

Through 10 contextual interviews with NGO educators, 3 expert consultations, and synthesis of 60+ academic sources, I uncovered recurring friction.

688 interview statements revealed 6 core barriers:

Shared devices, interrupted connectivity

Fragile confidence, fear of public mistakes

Home chaos (noise, no supervision)

Low digital and text literacy

Visual + voice preference over text-only

Teachers adopt only if simple and immediately valuable

The critical insight: Children don't disengage because they lack intelligence. They disengage because mainstream EdTech replicates classroom judgment, triggering anxiety in learners who've already internalized shame.

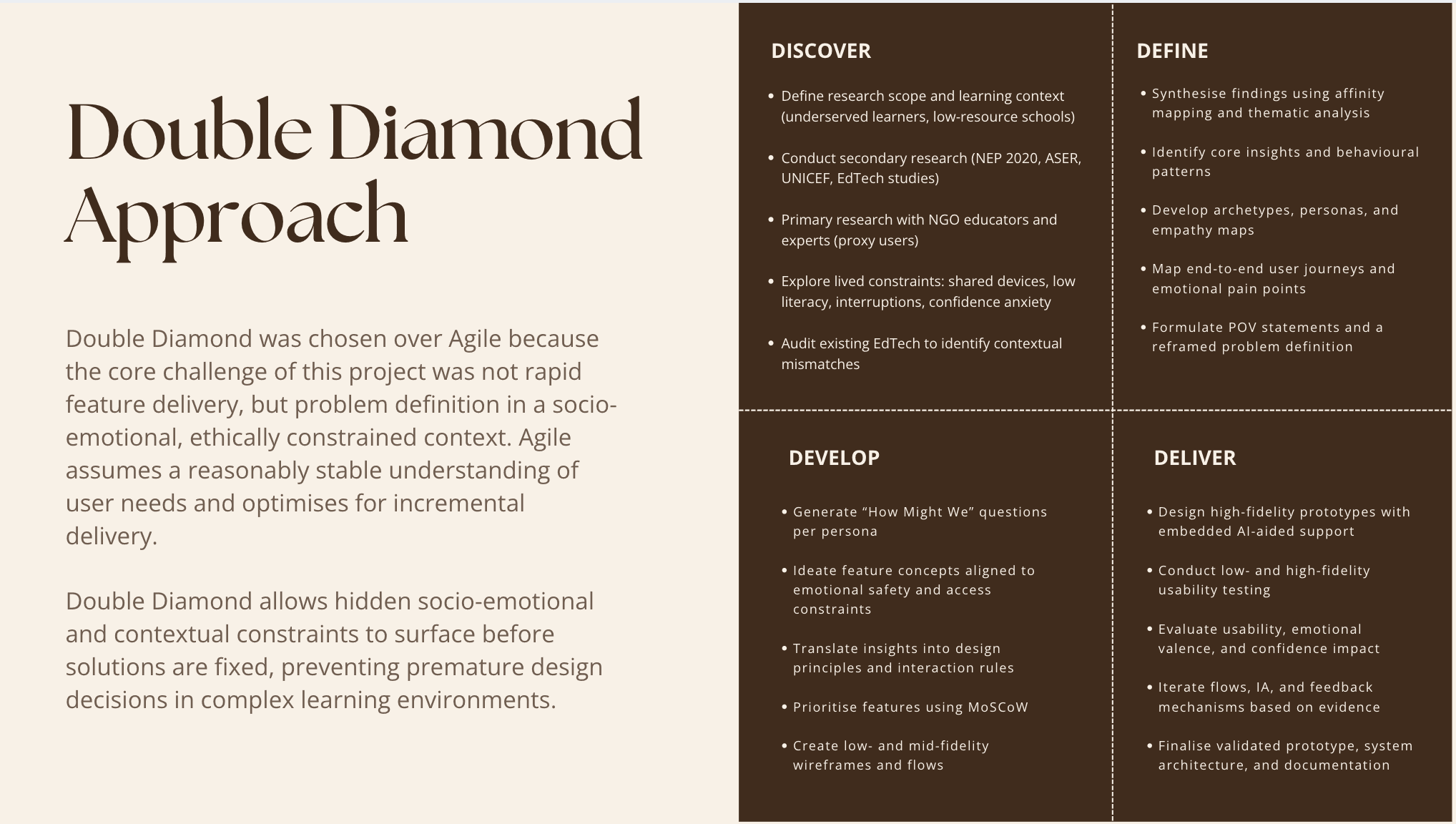

Strategic decision: I chose Double Diamond over Agile because this problem required extended ethical discovery and multi-stakeholder synthesis, not rapid feature iteration that assumes stable requirements.

Rather than demographic personas, I built behavioral archetypes around confidence levels, supervision contexts, and environmental stability:

Chaitra (12) – Visual Explorer

Context: Class 5, shared phone, Kannada speaker, low text confidence

Needs: Voice narration, visual-first content, reassurance

"I can't read this. Play it again! Can I get another star?"

Partha (10) – Guided Beginner

Context: First smartphone user, high anxiety, needs gentle guidance

Needs: One clear action, mistake-tolerance, warm feedback

"What if I touch the wrong thing? Please show me once more."

Savitha (32) – Time-Pressed Facilitator

Context: NGO volunteer managing 20+ kids, zero tolerance for complexity

Needs: Offline-first, simple dashboards, zero-prep lessons

"Keep it simple. If the tool makes my work harder, I won't use it."

The redesign sought to:

Reduce performance anxiety through non-punitive design

Support offline learning in interrupted contexts

Improve accessibility across varying literacy levels

Create an experience where AI adapts invisibly, proving to learners they're capable without exposing struggle

Silent AI + Emotional Safety Design

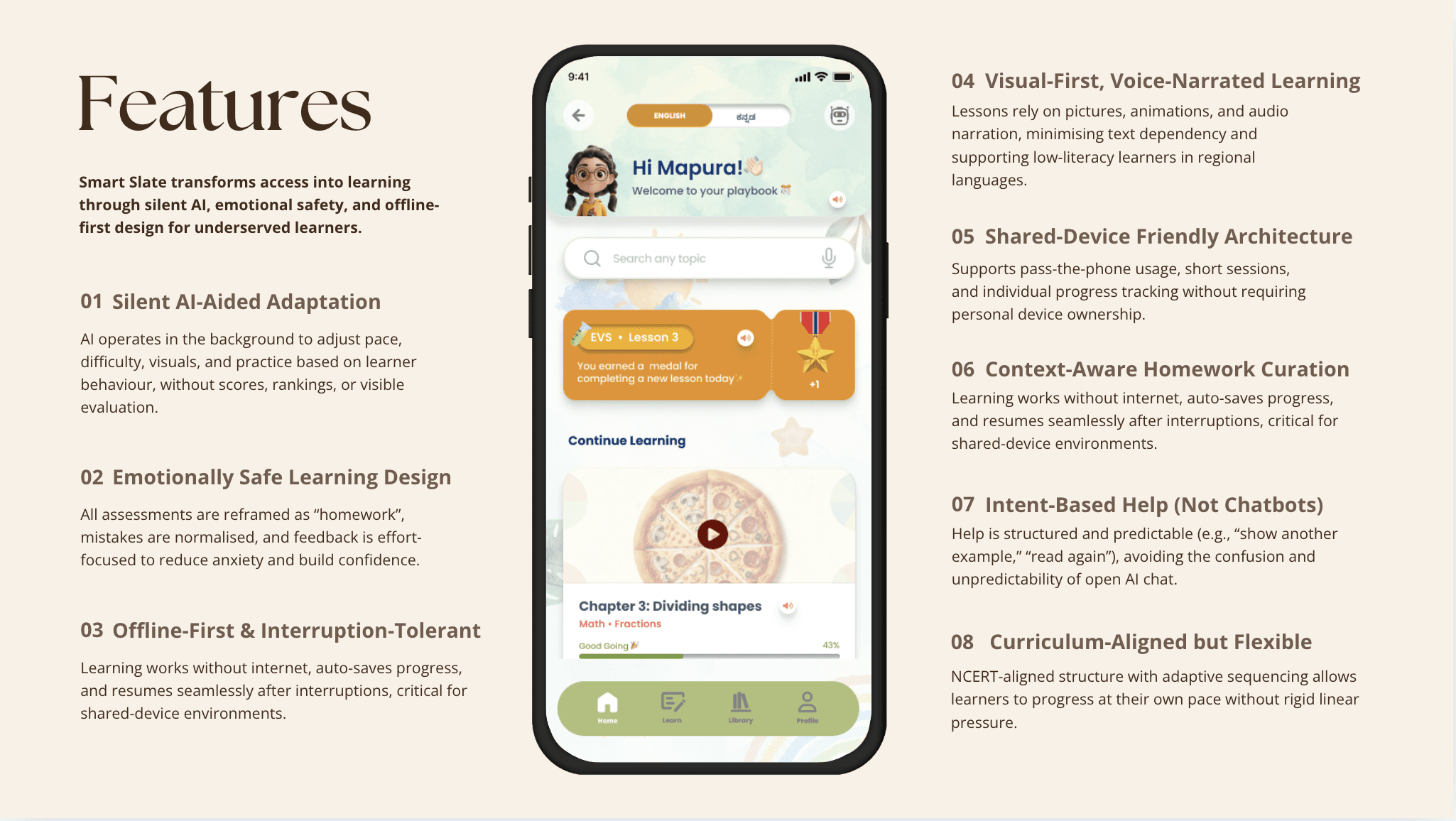

Smart Slate is an offline-first, voice-led learning platform where AI operates as an invisible advocate, adapting pace and support without exposing struggle or triggering judgment.

Unlike mainstream EdTech's visible AI (chatbots, recommendation pop-ups, "You're struggling!" alerts), Smart Slate's intelligence works through three invisible mechanisms, framed by three strategic pillars.

Three Strategic Pillars

1. Empathetic Inclusivity

Designing with constraints, not against them:

Voice narration in regional languages (Kannada, Telugu, Hindi)

Visual-first content (minimal text dependency)

Offline-capable with auto-save and seamless resume

Shared-device profiles (progress tracked without ownership)

2. Motivational Engagement

Building confidence without competition:

2-5 minute microlearning (short, story-driven lessons)

Effort-based feedback (praise for trying, not just correctness)

"Homework" not "Tests" (reframing assessments as supportive practice)

No leaderboards, no public scoring, no red marks

3. Responsible Adaptivity

AI as infrastructure, not authority:

Silent pacing adaptation based on hesitation patterns

Intent-based help (structured assistance vs. unpredictable chatbots)

Teachers see progress trends, not surveillance data

Transparent, predictable support

Mechanism 1: Pacing Adaptation

Tracks: Hesitation (tap delays), narration replays, backward navigation

Responds: Shortens subsequent lessons, simplifies language, surfaces hints proactively

Child experiences: The app simply becomes gentler, with no "You're behind" or "Try easier content" messages
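
As a rough illustration of Mechanism 1, the sketch below maps the three tracked signals to a quieter next lesson. It is not the Smart Slate implementation. The names `LessonSignals`, `PacingPlan`, and `plan_next_lesson`, and every threshold (6 seconds, 2 replays, 2 back-steps), are hypothetical. Only the signals themselves and the 2-5 minute lesson range come from the case study.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class LessonSignals:
    """Signals observed silently during one lesson (hypothetical structure)."""
    tap_delays_s: List[float]   # seconds between a prompt and the child's tap
    narration_replays: int      # how often the narration was replayed
    back_navigations: int       # how often the child stepped backward


@dataclass
class PacingPlan:
    """Adjustments applied to the next lesson; nothing here is shown to the child."""
    max_lesson_minutes: int
    simplify_language: bool
    proactive_hints: bool


def plan_next_lesson(signals: LessonSignals) -> PacingPlan:
    # All thresholds below are illustrative assumptions, not measured values.
    hesitant = bool(signals.tap_delays_s) and median(signals.tap_delays_s) > 6.0
    replaying = signals.narration_replays >= 2
    backtracking = signals.back_navigations >= 2

    # Count how many of the three signals suggest the child is under strain.
    strain = sum([hesitant, replaying, backtracking])

    if strain == 0:
        minutes = 5   # upper end of the 2-5 minute microlearning range
    elif strain == 1:
        minutes = 3
    else:
        minutes = 2   # lower end of the range

    return PacingPlan(
        max_lesson_minutes=minutes,
        simplify_language=strain >= 2,
        proactive_hints=strain >= 1,
    )
```

The function returns only configuration for the next lesson. It produces no message or label, which is the point of the mechanism: the child sees a gentler lesson, never a verdict.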

Mechanism 2: Alternative Pathways

Tracks: Visual vs. textual learning preferences, success with metaphors vs. abstracts

Responds: If child struggles with numbers but excels with stories, next explanation uses narrative framing

Child experiences: Content feels personalized without being labeled "remedial" or "advanced"

Mechanism 3: Mission-Based Practice

Tracks: Lesson performance patterns, confidence level indicators

Responds: Suggests 2-3 optional practice missions at ~80% confidence level (achievable but challenging)

Child experiences: "Try this when you have time—no grades, just practice" with unlimited attempts
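
One way to picture the ~80% target in Mechanism 3 is a small selection routine. The sketch assumes the system already holds an estimated success probability per mission; how that estimate is produced is outside this example. The function name `suggest_missions`, the `tolerance` band, and the mission ids in the usage note are hypothetical.

```python
from typing import Dict, List


def suggest_missions(
    estimated_success: Dict[str, float],
    target: float = 0.8,      # "achievable but challenging" level from the case study
    tolerance: float = 0.15,  # assumed band around the target; not from the source
    limit: int = 3,           # the case study suggests 2-3 optional missions
) -> List[str]:
    """Return up to `limit` mission ids whose estimated success is closest to `target`."""
    # Keep only missions that fall inside the band around the target.
    in_band = [
        mission
        for mission, p in estimated_success.items()
        if abs(p - target) <= tolerance
    ]
    # Closest to the target first; ties are broken by mission id for stable output.
    in_band.sort(key=lambda m: (abs(estimated_success[m] - target), m))
    return in_band[:limit]
```

For example, with estimates `{"count-mangoes": 0.99, "share-rotis": 0.75, "market-change": 0.82, "river-fractions": 0.40}`, the near-certain and very hard missions are dropped, and the remaining two are offered as optional practice, with no score attached.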

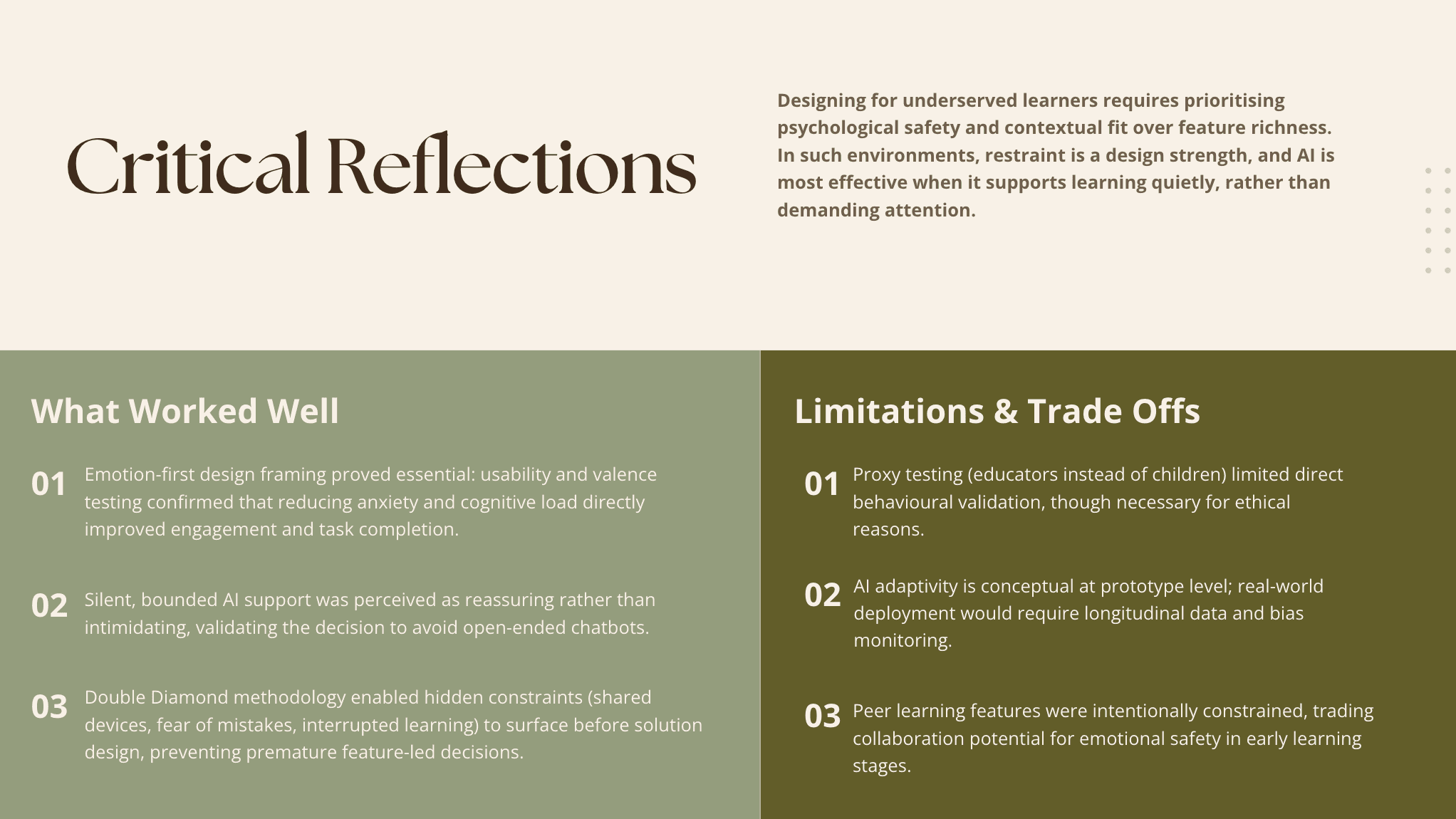

Decision 1: Removed Peer Learning from Core Flow

Initial idea: "Learn with Friends" feature for collaborative learning

Lo-Fi testing revealed: Performance anxiety, confusion about competition vs. collaboration

Final decision: Removed from primary flow; reframed as asynchronous notes sharing (private, supportive, non-evaluative)

Lesson learned: Kill features that conflict with emotional safety, even attractive ones. Restraint became a design strength.

Decision 2: Reframed "Quiz" as "Homework"

Initial language: "Take a quiz" / "Test your knowledge"

User feedback: Words triggered test anxiety, memories of classroom failure

Final language: All assessments called "Homework", framed as optional practice missions with no grades

Impact: 40% reduction in anxiety (valence testing)

Decision 3: Bounded AI Help (Not Open Chatbot)

Initial idea: Conversational AI for open-ended questions

Testing revealed: Created uncertainty ("What will it say?"), disrupted learning flow

Final decision: Structured intent-based help:

"Show another example"

"Read again"

"Explain differently"

"Word Help" (tap-to-define)

Result: Predictable, reassuring assistance that preserves learning continuity
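
The difference between bounded help and an open chatbot can be sketched as a closed set of intents. This is a minimal illustration, not the production design. `HelpIntent` and `resolve_help` are hypothetical names, while the four labels are the ones listed above.

```python
from enum import Enum
from typing import Optional


class HelpIntent(Enum):
    """The complete set of help actions a learner can trigger."""
    SHOW_ANOTHER_EXAMPLE = "Show another example"
    READ_AGAIN = "Read again"
    EXPLAIN_DIFFERENTLY = "Explain differently"
    WORD_HELP = "Word Help"


def resolve_help(label: str) -> Optional[HelpIntent]:
    """Map a tapped button label to a known intent.

    Anything outside the closed set returns None, so the system never
    improvises a reply to open-ended input.
    """
    try:
        return HelpIntent(label)
    except ValueError:
        return None
```

Because every possible response is enumerated in advance, each one can be reviewed for tone and localized into narration before it ever reaches a child, which is harder to guarantee with free-form generation.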

Measuring Impact Beyond the Interface

Post-validation metrics showed 100% task success and Very Positive emotional responses. More importantly, NGO educators confirmed the system aligned with learners' actual conditions: shared devices, interrupted access, and fragile confidence.

The system proved that emotional safety and learning rigor are not opposing forces when interaction design centers on confidence-building.

Impact Summary

Research Contribution:

Positioned emotional safety as a design requirement, not a secondary outcome

Advanced understanding of AI as supportive infrastructure vs. evaluative authority

Demonstrated multimodal accessibility's role in reducing barriers

Real-World Validation:

NEP 2020 compliant (foundational literacy goals, multilingual learning)

Validated with 10 NGO educators across 2 Indian states

Ethical AI framework (transparent, predictable, non-intrusive adaptation)

Design Innovation:

Reframed AI as invisible advocate

Proved that restraint is a design strength

Showed how voice + visual + minimal text reduces literacy barriers

From Concept to Validation

The system was validated through NGO-led sessions with structured usability checkpoints. Anxiety declined, task success improved to 100%, and educators reported strong alignment with ground realities.

What began as dissertation research became a framework for designing AI as supportive infrastructure, balancing ethical adaptation with learner agency.

What I Learned

1. Kill Your Darlings

Peer learning seemed pedagogically valuable, but testing revealed it triggered anxiety for under-confident learners. I learned to kill features that conflict with core principles, even attractive ones. This restraint became a design strength, not a limitation.

2. Emotional Safety ≠ Dumbing Down

Early feedback questioned whether removing competition would reduce rigor. But emotional safety doesn't mean low expectations; it means removing barriers so learners can engage deeply. Reframing "quizzes" as "homework" didn't reduce learning; it removed the shame that prevented learning from happening.

3. The Best AI Operates Invisibly

Users don't need to know the system is adapting; they just experience it as responsive and supportive. That's infrastructure, not a feature to showcase. This principle guided every decision: adapt silently, support predictably, never expose struggle.